Q&A With Gaurav Malhotra: What Can the Human Brain Teach Us About Artificial Intelligence?

By Erin Frick

ALBANY, N.Y. (March 19, 2026) — During middle school, Gaurav Malhotra's physics teacher took notice of his eclectic interests, which spanned formal disciplines like physics and math as well as more abstract fields like philosophy. He introduced Malhotra to the works of philosopher Bertrand Russell, whose wide-ranging writing included the philosophy of mind. This recommended reading ultimately shaped Malhotra's academic trajectory.

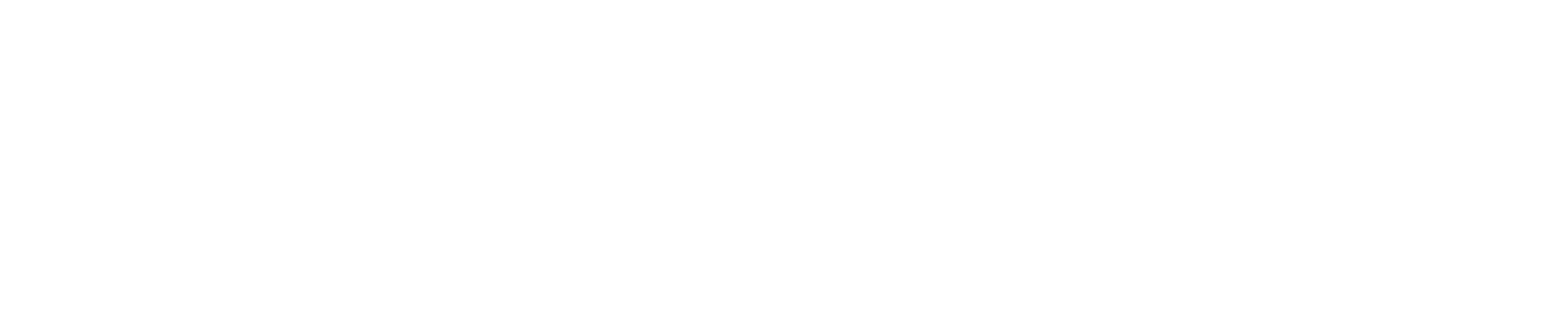

Part of the University at Albany's artificial intelligence cluster hire, Assistant Professor Malhotra joined the Department of Psychology at the College of Arts and Sciences in 2024 after completing postdoctoral research at the University of Bristol in the U.K. and Aix-Marseille University in France.

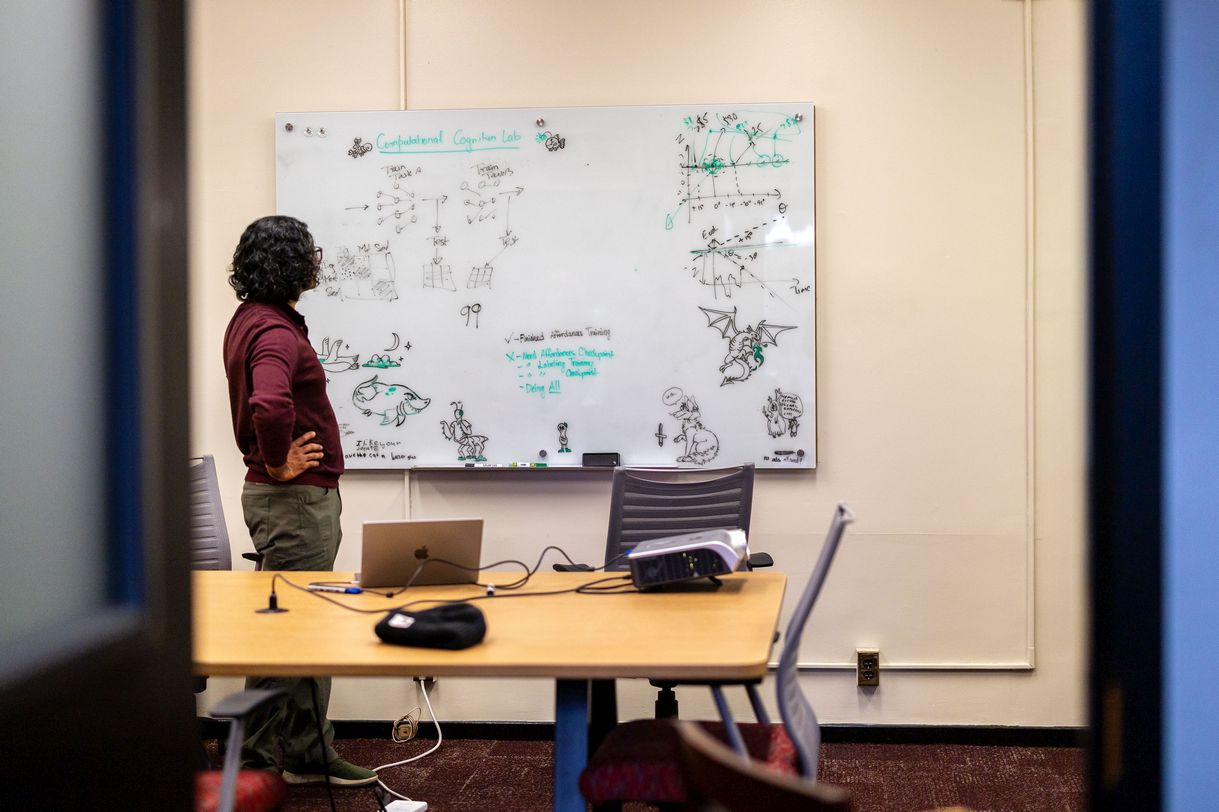

Today, Malhotra directs the Computational Cognition Lab, where he combines human behavioral experiments with computational models to understand how our environment and biology shape human vision and decision-making.

We sat down with Malhotra to learn more about his path, current work and research he plans to expand at UAlbany.

How did you arrive at the intersection of computer science, psychology and neuroscience?

As a young student, I was equally interested in how the human mind works and in tangible ways to measure and study it. I saw computer science as a field where I could pursue both. During my undergraduate studies, I became especially interested in artificial intelligence because it merged the philosophy of mind with my existing skills in informatics and computer programming.

After working for several years as a software engineer, I decided to pursue postgraduate studies in cognitive science at the Informatics Institute at the University of Edinburgh in Scotland. At that point, about 14 years ago, AI models had advanced to the extent that people were beginning to believe they might actually be able to reflect some of the cognitive processes at play within the human mind and yield real insight into how human cognition works.

This cascade of events led me to pursue twin research strands: using AI models to understand human cognition and using experiments in human psychology to understand how AI models work. This intersection is important because many popular AI models can do all sorts of fancy, complex things, but the truth is, we don't actually know how they are able to do what they do. Psychologists have spent a long time trying to understand another such black box: the human mind. Perhaps we can borrow approaches from psychology not only to design better AI systems but also to understand current systems better.

What are you studying now?

My overall research agenda focuses on understanding how the environment shapes our cognitive mechanisms. I'm particularly interested in cognitive parameters, the internal states that govern how we perform various cognitive tasks. These parameters appear in models of attention, memory, decision-making, visualization, language processing and more. We suspect that these parameters are adaptable and adjust to the environment, either through evolution or through learning to perform different tasks. I'm interested in understanding the mechanisms behind this adaptation, whether it is optimal, and what tradeoffs shape these parameters in complex environments.

What can we learn by studying how our brains visualize the world?

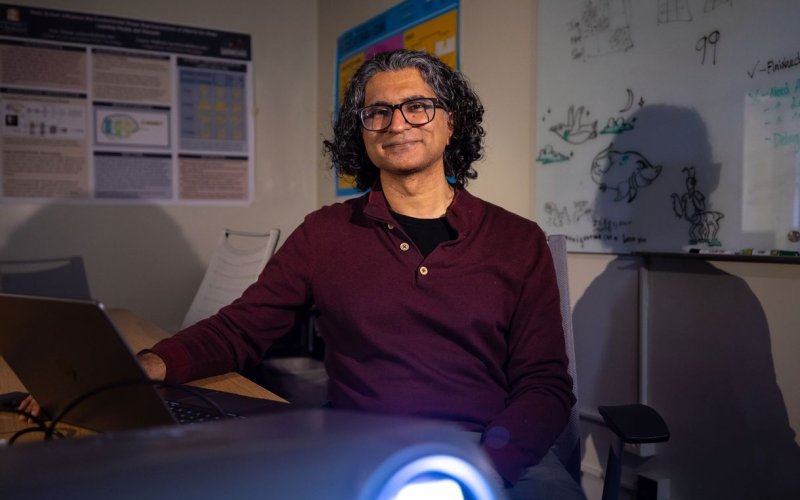

Despite decades of research in human cognition, we still don’t fully understand how the brain represents objects. In my lab, we study how the human visual system works and what parallels exist between human perception and representations produced by AI models.

Human visual representation demonstrates capabilities that AI still struggles with. Take, for example, infants who have only seen things in the real world and have never seen a cartoon or a line drawing. If you show them a cartoon or a line drawing of an elephant, they can very easily identify it as an elephant, even when it's missing important visual features. AI systems can't decipher this sort of abstraction without being trained on line drawings first.

I’m currently working with an undergraduate student on a study examining how object utility shapes mental representations. We created a set of novel “Franken-objects” that no one has seen before, some of which are assigned specific functions. After participants learn the objects and their uses, we present distorted versions and ask whether they look familiar and what they can do. Their responses reveal which features were most important in forming their mental representations.

A major challenge for current AI systems is that they require an enormous amount of energy. In our lab, by contrast, humans learn these novel objects and their uses quickly, on the energy budget of a sandwich. Understanding how the brain enables this efficiency could help guide the development of more energy-efficient AI systems.

How can we improve AI systems by better understanding human decision making?

People steadily accumulate information from the environment, and when they feel they have enough information in favor of one option or another, they decide. Our research probes this process, including how we create mental shortcuts to help us make decisions. Understanding how we form these shortcuts could answer questions about AI systems: both how they work and which sorts of processes are more efficient than others.
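For readers curious how "accumulate until you have enough" is often formalized, here is a minimal sketch of a generic evidence-accumulation model, a random walk in the spirit of the drift-diffusion (sequential sampling) models common in decision research. It is an illustration under simple assumptions, not the lab's own model: a fixed drift toward option "A", Gaussian noise on every sample, and symmetric decision thresholds. The function names `accumulate_to_bound` and `simulate` and all parameter values are hypothetical.

```python
import random
from statistics import mean


def accumulate_to_bound(drift, noise, threshold, max_steps=10_000, rng=None):
    """Hypothetical evidence accumulator: a random walk between two bounds.

    Each step adds one noisy sample of evidence. Positive drift favors "A";
    reaching +threshold chooses "A", reaching -threshold chooses "B".
    Returns (choice, steps); choice is None if no bound is hit in max_steps.
    """
    if rng is None:
        rng = random.Random()
    evidence = 0.0
    for step in range(1, max_steps + 1):
        evidence += drift + rng.gauss(0.0, noise)  # one noisy sample
        if evidence >= threshold:
            return "A", step
        if evidence <= -threshold:
            return "B", step
    return None, max_steps


def simulate(threshold, trials=2000, drift=0.2, noise=1.0, seed=0):
    """Run many trials; return (share of "A" choices, mean steps to decide)."""
    rng = random.Random(seed)
    results = [accumulate_to_bound(drift, noise, threshold, rng=rng)
               for _ in range(trials)]
    decided = [(choice, steps) for choice, steps in results if choice is not None]
    accuracy = sum(choice == "A" for choice, _ in decided) / len(decided)
    return accuracy, mean(steps for _, steps in decided)


if __name__ == "__main__":
    # In this toy model, a lower threshold trades accuracy for speed.
    for threshold in (1.0, 3.0):
        acc, steps = simulate(threshold)
        print(f"threshold={threshold}: accuracy={acc:.2f}, mean steps={steps:.1f}")
```

Raising the threshold in this sketch makes decisions slower but more often correct, which is one simple way to picture how a decision rule could trade accuracy for speed.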

The goal is to develop AI systems that are more aligned with the way human cognition works. This could also give us more control over what AI systems can do and help us create more effective guardrails for safe AI use.

What determines where we allocate our mental energy?

Perhaps unsurprisingly, our research shows that humans often make decisions that are less than optimal, yet we've been very successful as a species. Why have humans evolved to be non-optimal? My hypothesis is that when cognitive problems become demanding, people shift toward strategies that conserve mental energy. In other words, we constantly manage a cognitive “energy budget.”

Opportunity costs influence this. I've observed that people think: if I spend too much time on this question, then I will have less time to spend on a future problem. There's a famous parable about Buridan's donkey, which stood between two equally appealing piles of hay, couldn't decide which one to eat from and, because of this indecision, died of hunger. We don't want to find ourselves in a situation where indecision costs us something we need.

This suggests that a control process helps us decide how much effort to spend on a task and when to disengage. I’m interested in understanding how this mechanism works.

AI systems are designed to solve tasks, but not to determine the most efficient way to approach them. Understanding how humans manage this type of effort could help build AI systems that regulate their own computational resources.

There are also potential implications for mental health treatment. For example, it is common for people suffering from depression to get caught in problematic thoughts that worsen their condition. Understanding the cognitive mechanism that traps them in these thoughts could inform strategies to help them disengage.

What drew you to UAlbany?

I was initially attracted by the research happening in cognitive psychology. I saw right away that several people were working in areas that overlap with mine and that I could potentially collaborate with them. Then, when I came to interview, I had the most fantastic experience. I found this really warm, welcoming department, and that collegial energy confirmed my decision to join. I grew up in a small town in the mountains of India, so I also liked that nature is so accessible here in Albany.

What are you excited to pursue here?

I'm currently expanding my research in several directions. I'm working with students from both psychology and information sciences to explore how AI models process information compared to humans. For example, we're investigating why both humans and large language models like ChatGPT take longer to respond to certain types of reasoning problems.

What's particularly fascinating is that when you ask AI models to show their internal reasoning process, they don't just say, “Two plus two is four.” Instead, they might say, “Two plus one is three, and three plus one is four. But wait — maybe the person asking me this is trying to trick me. Sometimes people say two plus two is five and that means something else.” It sounds almost neurotic, but I'm deeply interested in understanding what internal processes lead to these elaborate reasoning chains.

I'm also exploring practical applications for interventions that can help people change or control their decision-making patterns. Fundamentally, I'm trying to understand the mechanisms that allow humans to navigate complex cognitive landscapes so efficiently — both to advance our understanding of the human mind, and to better understand and wield AI systems.