Challenging Image Manipulation Detection (CIMD)

The ability to detect manipulation in multimedia data is vital in digital forensics. Existing Image Manipulation Detection (IMD) methods are mainly based on detecting anomalous features arising from image editing or double compression artifacts.

All existing IMD techniques encounter challenges when detecting small tampered regions in a large image. Moreover, compression-based IMD approaches face difficulties when an image has been double-compressed with identical quality factors.
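One way to see why identical quality factors are hard is a simplified sketch of the quantization step alone. It is an illustration under stated assumptions, not the detection method discussed here: the coefficients are synthetic Gaussian samples standing in for DCT coefficients, the step sizes 5 and 3 are arbitrary, and `quantize` and `empty_bins` are hypothetical helper names. Real JPEG recompression also rounds and clips in the pixel domain, so actual images are not perfectly unchanged.

```python
import random
from collections import Counter

def quantize(coeff, step):
    """Quantize and dequantize a single coefficient with the given step."""
    return step * round(coeff / step)

def empty_bins(values, step, lo, hi):
    """Count multiples of `step` in [lo, hi] that no value landed on."""
    hist = Counter(values)
    return sum(1 for v in range(lo, hi + 1, step) if hist[v] == 0)

random.seed(0)
# Synthetic stand-in for DCT coefficients (assumption, not real image data).
coeffs = [random.gauss(0, 40) for _ in range(20000)]

first = [quantize(c, 5) for c in coeffs]   # first compression, step 5
same = [quantize(c, 5) for c in first]     # second compression, same step
diff = [quantize(c, 3) for c in first]     # second compression, different step

# Re-quantizing with the same step leaves every coefficient unchanged,
# so the histogram carries no trace of the second compression.
same_is_unchanged = (same == first)
gaps_same = empty_bins(same, 5, -30, 30)

# A different step leaves periodic empty bins in the histogram,
# the kind of artifact compression-based detectors can look for.
gaps_diff = empty_bins(diff, 3, -30, 30)

print(same_is_unchanged, gaps_same, gaps_diff)
```

In this idealized setting the same-step case is indistinguishable from single compression, while the different-step case leaves gaps in the coefficient histogram.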

To investigate how state-of-the-art (SoTA) IMD methods perform under those challenging conditions, we introduce a new Challenging Image Manipulation Detection (CIMD) benchmark dataset. It consists of two subsets, for evaluating editing-based and compression-based IMD methods, respectively.

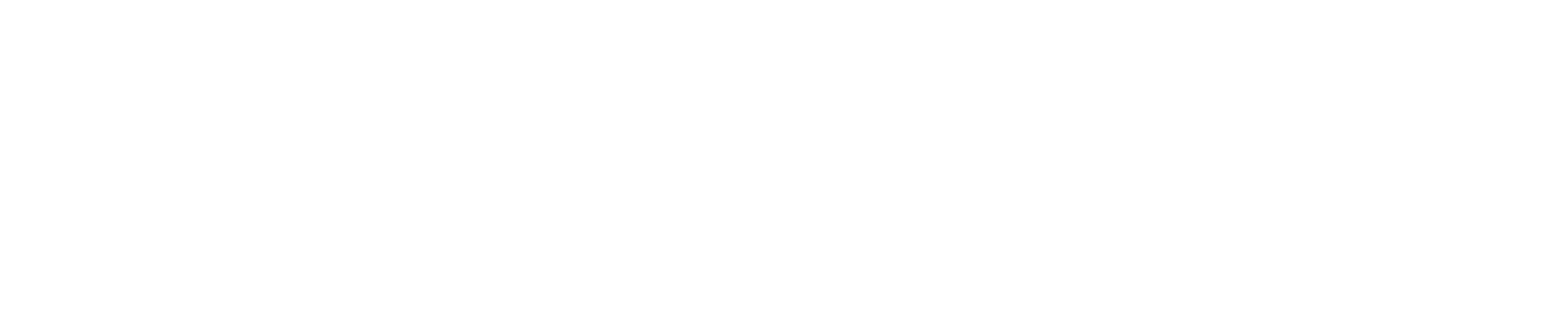

The dataset images were manually captured and manually tampered, and they come with high-quality annotations. In addition, we propose a new two-branch network model based on HRNet that can better detect both image-editing and compression artifacts in those challenging conditions.

Extensive experiments on the CIMD benchmark show that our model significantly outperforms SoTA IMD methods.

Read our research paper, A New Benchmark and Model for Challenging Image Manipulation Detection.

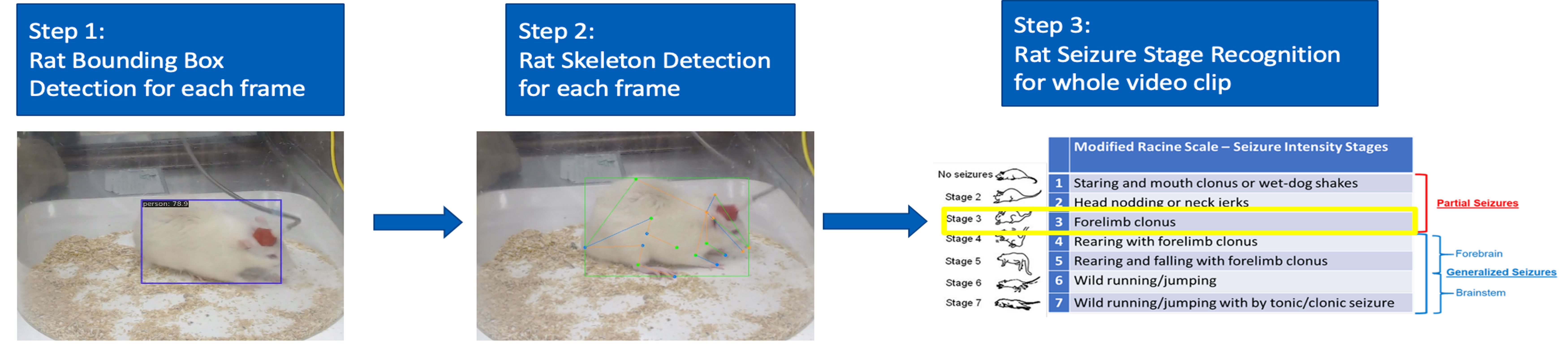

Skeleton-based Human Action Recognition

Human action recognition is an important but challenging problem. With advances in low-cost sensors and real-time joint coordinate estimation algorithms, reliable 3D skeleton-based action recognition is now feasible.

We are interested in using recurrent neural network (RNN) models to solve the skeleton-based human action recognition problem.

Single & Multiple Object Tracking

Object tracking, which aims to extract trajectories of single or multiple moving objects in a video sequence, is a crucial step in understanding and analyzing video sequences.

A robust and reliable tracking system is the basis for a wide range of practical applications ranging from video surveillance and autonomous driving to sports video analysis.

We have developed several object tracking algorithms based on the hypergraph formalism and provided a benchmark dataset for multi-object tracking performance evaluation.

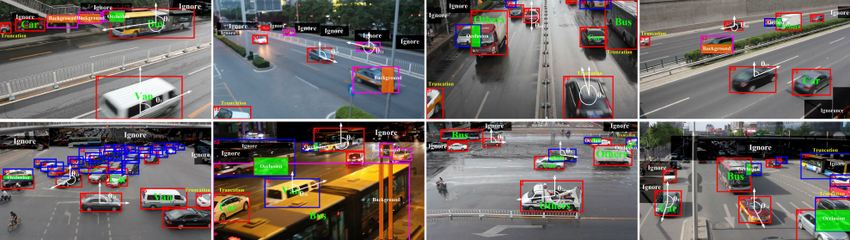

UA-DETRAC Benchmark Dataset for Multi-Object Tracking

UA-DETRAC is a challenging real-world multi-object detection and multi-object tracking benchmark. The dataset consists of 10 hours of videos captured with a Canon EOS 550D camera at 24 different locations in Beijing and Tianjin, China.

The videos are recorded at 25 frames per second (fps) with a resolution of 960 × 540 pixels. The UA-DETRAC dataset contains more than 140 thousand frames and 8,250 manually annotated vehicles, leading to a total of 1.21 million labeled bounding boxes of objects.
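As a rough sanity check on annotation density, the figures above can be turned into per-frame and per-vehicle averages. This is a back-of-the-envelope sketch that treats "more than 140 thousand" as exactly 140,000 frames, so the per-frame value is an approximate upper estimate; the variable names are illustrative only.

```python
# Figures quoted in the dataset description (frame count rounded down).
frames = 140_000
vehicles = 8_250
boxes = 1_210_000

# Average number of labeled boxes in each frame.
boxes_per_frame = boxes / frames

# Average number of frames in which each annotated vehicle appears.
boxes_per_vehicle = boxes / vehicles

print(f"{boxes_per_frame:.2f} boxes per frame")      # about 8.64
print(f"{boxes_per_vehicle:.1f} boxes per vehicle")  # about 146.7
```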

We also benchmark state-of-the-art object detection and multi-object tracking methods, using the evaluation metrics detailed in our work.